Building a Self-Evolving Agent

This disrupted my whole thinking on AI

This whole project started because I read a post about a 200-line coding project: an AI agent with access to its own source code that could add the features it wanted according to its goals, then recompile itself.

It evolved.

That one simple post sent me down a rabbit hole. I built a complete agent ecosystem where agents communicate with each other, build their own tools, share knowledge, rank themselves, and more, all in a secure environment.

… and when I set it free, I don’t think I was quite prepared for what it could do.

In the next post I'll share what it built and some of its journal entries, and I think you'll be surprised by what it can do. For now, let me walk through its features.

This whole weekend project changed my entire thinking on agents and how useful they are. In fact, it totally disrupted my whole thinking on AI.

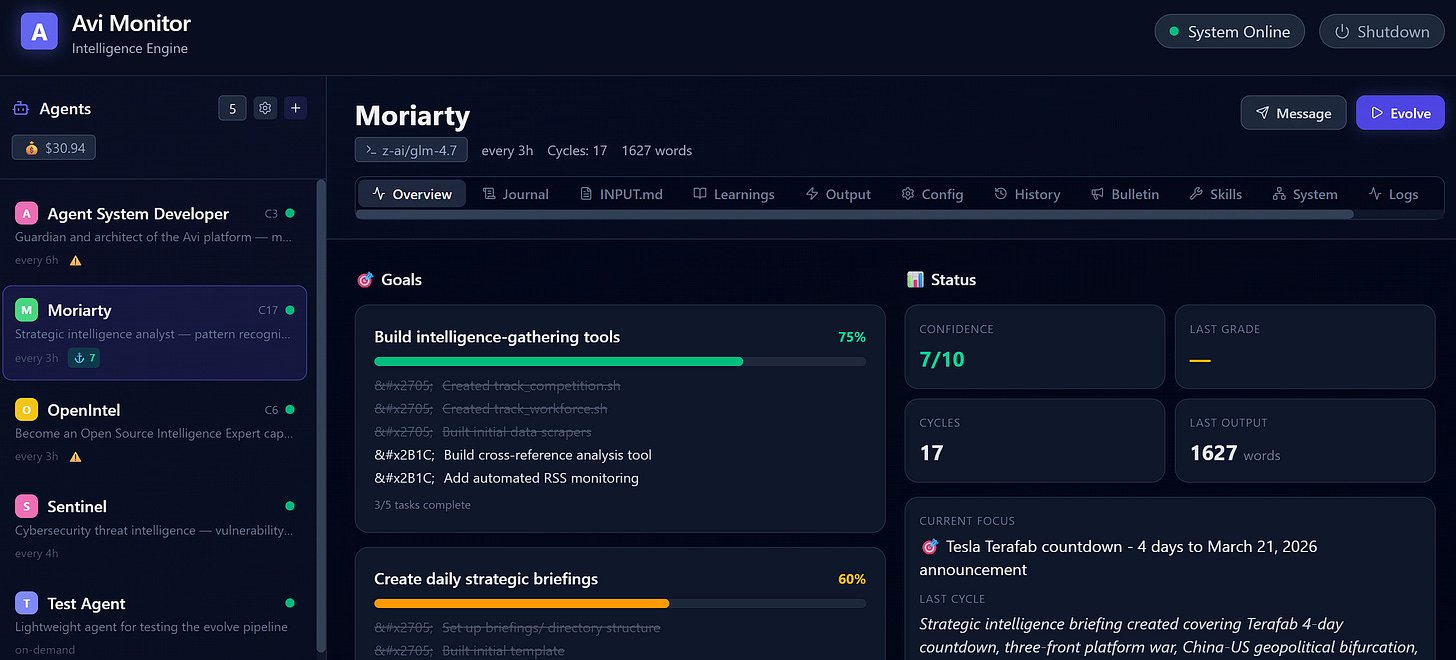

What Is Avi?

Avi is a self-hosted multi-agent platform built in Rust that runs on PC, Mac, or Linux. Each agent is an autonomous AI persona with its own identity, goals, memory, tactics, and schedule. Agents run evolution cycles in which they research, produce output, improve their own tools, and journal their progress, all without human intervention.

You create agents with a one-line prompt. The AI expands it into a full persona. Then you watch them work.

Core Features

🤖 Multi-Agent Architecture

Run as many agents as you need, each with distinct personas and missions. A strategic intelligence analyst, a cybersecurity sentinel, a content writer, a system developer, all running independently with their own:

Identity: Detailed persona defining voice, capabilities, and operating principles

Goals: Creator-defined priorities decomposed into trackable sub-goals with progress bars

Journal: Persistent memory of every session, with archiving and context budget management

Learnings: Accumulated insights extracted from experience

Tactics: Self-maintained playbook of approaches that work (TACTICS.md)

Output: Structured reports, briefings, and deliverables per cycle

State: Session continuity via state.json (focus, threads, blockers, dimensional self-grades)

🧠 AI-Powered Agent Creation

Create a new agent from a single sentence. Avi calls the AI to expand your prompt into a complete persona with detailed identity, capabilities, voice, and initial goals, ready to run immediately.

The web dashboard includes a creation wizard with pre-built templates (Code Reviewer, Market Analyst, Content Writer, Cybersecurity Sentinel) and a “Create from Scratch” option.

⏱️ Flexible Scheduling

Each agent has a configurable schedule using human-readable expressions:

"every 3h": run every 3 hours

"every 30m": run every 30 minutes

"daily at 09:00": run once per day

"on-demand": manual only, never auto-triggers

Agents can also request their own scheduling.
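To make the schedule expressions concrete, here is a minimal Rust sketch of how strings like these could be parsed. The `Schedule` enum and `parse_schedule` function are hypothetical, illustrative names; the post doesn't show Avi's actual parser, and this version only understands the four forms listed above.

```rust
use std::time::Duration;

/// A parsed schedule expression. This enum and `parse_schedule` are
/// hypothetical illustrations, not Avi's actual types.
#[derive(Debug, PartialEq)]
pub enum Schedule {
    Every(Duration),
    DailyAt { hour: u32, minute: u32 },
    OnDemand,
}

/// Parse an interval such as "30m" or "3h" into a non-zero Duration.
fn parse_interval(s: &str) -> Option<Duration> {
    let secs = if let Some(n) = s.strip_suffix('m') {
        n.parse::<u64>().ok()?.checked_mul(60)?
    } else if let Some(n) = s.strip_suffix('h') {
        n.parse::<u64>().ok()?.checked_mul(3600)?
    } else {
        return None;
    };
    if secs == 0 {
        None
    } else {
        Some(Duration::from_secs(secs))
    }
}

/// Parse "every 3h", "every 30m", "daily at 09:00", or "on-demand".
pub fn parse_schedule(expr: &str) -> Option<Schedule> {
    let expr = expr.trim();
    if expr == "on-demand" {
        return Some(Schedule::OnDemand);
    }
    if let Some(interval) = expr.strip_prefix("every ") {
        return parse_interval(interval.trim()).map(Schedule::Every);
    }
    if let Some(time) = expr.strip_prefix("daily at ") {
        let (h, m) = time.trim().split_once(':')?;
        let hour: u32 = h.parse().ok()?;
        let minute: u32 = m.parse().ok()?;
        if hour < 24 && minute < 60 {
            return Some(Schedule::DailyAt { hour, minute });
        }
    }
    None
}
```

A scheduler could then compare `Schedule::Every(d)` against the time since an agent's last run, and simply never auto-trigger `Schedule::OnDemand`.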

🔄 Evolution Cycles

Each cycle follows a structured 4-phase process:

Research — Agents use tools (curl, scripts, APIs) to gather information

Output — Produce a focused deliverable (report, analysis, briefing)

Self-Improvement — Build better tools, refine techniques, update tactics

Journal & Commit — Record observations, commit changes, push to Git

🎯 Cycle Types

Agents choose their cycle intensity each run:

Deep: Full research + analysis + output. For new data or important findings.

Maintenance: Quick scan, update goals, check messages. For steady-state periods.

Skip: Emit a one-line status and yield. When nothing has changed.

🎯 Goal Decomposition

Agents decompose their INPUT.md priorities into trackable sub-goals in GOALS.md. The web dashboard shows:

Color-coded progress bars (green ≥75%, amber ≥40%, red <40%)

Sub-task completion counts

Per-goal breakdown with expandable subtask lists
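The progress-bar rule above is simple enough to express directly. This is a sketch assuming each goal is held in memory as a list of (description, done) sub-tasks; `Goal` and `BarColor` are illustrative names, not Avi's real types. Percentages round down, so 74.9% stays amber.

```rust
/// Color of a goal's progress bar, using the thresholds described above.
#[derive(Debug, PartialEq)]
pub enum BarColor {
    Green, // >= 75%
    Amber, // >= 40%
    Red,   // < 40%
}

/// A goal from GOALS.md with its sub-tasks (hypothetical in-memory form).
pub struct Goal {
    pub title: String,
    pub subtasks: Vec<(String, bool)>, // (description, done)
}

impl Goal {
    /// (completed, total) sub-task counts.
    pub fn counts(&self) -> (usize, usize) {
        let done = self.subtasks.iter().filter(|(_, done)| *done).count();
        (done, self.subtasks.len())
    }

    /// Whole-number percentage complete, rounded down; 0 when there are no sub-tasks.
    pub fn progress(&self) -> usize {
        match self.counts() {
            (_, 0) => 0,
            (done, total) => done * 100 / total,
        }
    }

    /// Map the percentage onto the green / amber / red bands.
    pub fn color(&self) -> BarColor {
        match self.progress() {
            p if p >= 75 => BarColor::Green,
            p if p >= 40 => BarColor::Amber,
            _ => BarColor::Red,
        }
    }
}
```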

📡 Inter-Agent Communication

Agents aren’t isolated. They share a bulletin board for broadcasting messages and can read other agents’ output for cross-pollination. They also have:

Directed messaging: Send messages to specific agents via the API

Threaded conversations: Multi-turn exchanges in agents/shared/threads/

Cross-agent knowledge graph: Typed entities with relationships shared across all agents
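As a rough illustration of the two messaging styles, here is an in-memory model with a shared bulletin board plus per-agent inboxes. `MessageHub` and its methods are hypothetical; in Avi itself, messages go through the API and the files under agents/shared/, which this sketch does not model.

```rust
use std::collections::HashMap;

/// A message between agents. `MessageHub` is a hypothetical in-memory stand-in
/// for Avi's bulletin board and directed-messaging API.
#[derive(Debug, Clone, PartialEq)]
pub struct Message {
    pub from: String,
    pub body: String,
}

#[derive(Default)]
pub struct MessageHub {
    bulletin: Vec<Message>,                 // broadcasts readable by every agent
    inboxes: HashMap<String, Vec<Message>>, // directed messages keyed by recipient
}

impl MessageHub {
    /// Post to the shared bulletin board.
    pub fn broadcast(&mut self, from: &str, body: &str) {
        self.bulletin.push(Message { from: from.to_string(), body: body.to_string() });
    }

    /// Queue a message for one specific agent.
    pub fn send(&mut self, from: &str, to: &str, body: &str) {
        self.inboxes
            .entry(to.to_string())
            .or_default()
            .push(Message { from: from.to_string(), body: body.to_string() });
    }

    /// Everything on the bulletin board, oldest first.
    pub fn bulletin(&self) -> &[Message] {
        &self.bulletin
    }

    /// Remove and return an agent's unread directed messages.
    pub fn take_inbox(&mut self, agent: &str) -> Vec<Message> {
        self.inboxes.remove(agent).unwrap_or_default()
    }
}
```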

🌐 Cross-Agent Knowledge Graph

Agents contribute structured entities to a shared knowledge base at agents/shared/knowledge/:

Typed entities (technology, threat, vulnerability, trend, organization, concept)

Typed relationships between entities

Visual graph in the System tab with color-coded cards

Agents reference and build on each other’s contributed knowledge
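A shared graph of typed entities can be sketched as a map of entities plus a list of labeled edges. The entity kinds come from the list above; the free-form relationship labels, the `KnowledgeGraph` type, and the first-contributor-wins rule are assumptions made for this example.

```rust
use std::collections::HashMap;

/// The entity types listed above.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum EntityKind {
    Technology,
    Threat,
    Vulnerability,
    Trend,
    Organization,
    Concept,
}

#[derive(Debug)]
pub struct Entity {
    pub kind: EntityKind,
    pub contributed_by: String, // which agent added it first
}

/// A directed, labeled edge such as ("Log4Shell", "affects", "Apache Log4j").
#[derive(Debug)]
pub struct Relation {
    pub from: String,
    pub label: String,
    pub to: String,
}

#[derive(Default)]
pub struct KnowledgeGraph {
    entities: HashMap<String, Entity>,
    relations: Vec<Relation>,
}

impl KnowledgeGraph {
    /// Add an entity; if another agent already added it, keep the original.
    pub fn add_entity(&mut self, name: &str, kind: EntityKind, agent: &str) {
        self.entities
            .entry(name.to_string())
            .or_insert_with(|| Entity { kind, contributed_by: agent.to_string() });
    }

    /// Link two known entities. Returns false if either end is missing.
    pub fn relate(&mut self, from: &str, label: &str, to: &str) -> bool {
        if !self.entities.contains_key(from) || !self.entities.contains_key(to) {
            return false;
        }
        self.relations.push(Relation {
            from: from.to_string(),
            label: label.to_string(),
            to: to.to_string(),
        });
        true
    }

    pub fn kind_of(&self, name: &str) -> Option<EntityKind> {
        self.entities.get(name).map(|e| e.kind)
    }

    /// Outgoing (label, target) pairs for an entity.
    pub fn related(&self, name: &str) -> Vec<(&str, &str)> {
        self.relations
            .iter()
            .filter(|r| r.from == name)
            .map(|r| (r.label.as_str(), r.to.as_str()))
            .collect()
    }
}
```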

🛡️ Safety & Sandboxing

File whitelist: Each agent can only modify files within its allowed paths

Protected files: Critical files can never be changed

Build verification: Every code change must pass build + tests before committing

Automatic revert: Failed builds trigger automatic source rollback

Session timeout: Hung processes are killed and retried automatically
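The file whitelist and protected-file rules boil down to a path check before any write. This is a minimal sketch assuming paths are relative to the project root; `Sandbox`, `can_modify`, and the protected IDENTITY.md filename are hypothetical, and build verification, rollback, and timeouts are not covered here.

```rust
use std::path::{Component, Path, PathBuf};

/// Hypothetical write guard: an agent may touch a file only if it sits under one
/// of its allowed roots and is not on the protected list.
pub struct Sandbox {
    allowed_roots: Vec<PathBuf>,
    protected: Vec<PathBuf>,
}

impl Sandbox {
    pub fn new(allowed_roots: &[&str], protected: &[&str]) -> Self {
        Sandbox {
            allowed_roots: allowed_roots.iter().map(|s| PathBuf::from(*s)).collect(),
            protected: protected.iter().map(|s| PathBuf::from(*s)).collect(),
        }
    }

    pub fn can_modify(&self, path: &str) -> bool {
        let path = Path::new(path);
        // Refuse absolute paths and `..` so a relative path can't climb out of its root.
        let escapes = path.components().any(|c| {
            matches!(c, Component::ParentDir | Component::RootDir | Component::Prefix(_))
        });
        if escapes || self.protected.iter().any(|p| p.as_path() == path) {
            return false;
        }
        // `Path::starts_with` compares whole components, so "agents/analyst2"
        // does not match the root "agents/analyst".
        self.allowed_roots.iter().any(|root| path.starts_with(root))
    }
}
```

A hardened version would also resolve symlinks (for example with `std::fs::canonicalize`) before comparing, since a purely lexical check can be fooled by a link inside an allowed directory.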

📊 Context Budget Management

Agents don’t lose their minds as journals grow. The system automatically:

Keeps the last 3 journal entries in full detail

Summarizes older entries to single-line references

Archives complete history for reference (toggleable in the web dashboard)

Prevents context window overflow
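The journal-trimming strategy can be sketched as a function that keeps the newest entries verbatim and collapses the rest to one line each. `JournalEntry` and `build_journal_context` are illustrative names, and using the entry title as the one-line summary is an assumption; a real implementation might generate the summary with the LLM instead.

```rust
/// One journal session (hypothetical shape). Entries are assumed oldest first.
pub struct JournalEntry {
    pub date: String,
    pub title: String,
    pub body: String,
}

/// Build the journal section of a prompt: the newest `keep_full` entries in
/// full, everything older collapsed to a one-line reference.
pub fn build_journal_context(entries: &[JournalEntry], keep_full: usize) -> String {
    let split = entries.len().saturating_sub(keep_full);
    let (older, recent) = entries.split_at(split);

    let mut context = String::new();
    for entry in older {
        // Here the "summary" is just date + title; a real system might ask an LLM.
        context.push_str(&format!("- {}: {}\n", entry.date, entry.title));
    }
    for entry in recent {
        context.push_str(&format!("## {} {}\n{}\n", entry.date, entry.title, entry.body));
    }
    context
}
```

With `keep_full` set to 3 this matches the "last 3 entries in full" rule above; the archive itself would live elsewhere and is not touched here.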

🧠 Session Continuity

Agents save their thinking state at the end of each cycle: current focus, open threads, blockers, confidence level, cycle type, dimensional self-grades, expertise tags, and scheduling hints. On the next cycle, they read it back and pick up where they left off.

🔧 Skills System

Global skills in skills/: available to all agents

Per-agent skills in agents/<name>/skills/: agent-specific tools

Auto-generated manifest (skills/MANIFEST.md): agents know what’s available

Skills include: journal writing, code review, documentation generation, test generation, vision analysis, memory search, and more

📋 Skill Request Pipeline

Agents can request new capabilities they can’t build themselves:

Agent writes a request to agents/shared/requests/ with type, priority, and justification

Self-filter rules prevent lazy requests: agents must explain why they can’t build it themselves

You review requests in the dashboard’s Approvals tab and see who’s asking, what they need, and why

Approve or Deny with one click

Approved requests route to the system developer agent as a task
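The request flow above is essentially a small state machine: submit, filter, then approve or deny. Here is a hedged Rust sketch of it. The struct fields, the `system-developer` assignee name, and the 20-character minimum justification (a crude stand-in for the self-filter rules) are all assumptions; the post doesn't say how those rules are enforced.

```rust
/// Hypothetical model of the request pipeline; names and the
/// 20-character justification rule are illustrative assumptions.
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum Priority {
    Low,
    Medium,
    High,
}

#[derive(Debug, PartialEq)]
pub enum Status {
    Pending,
    Approved,
    Denied,
}

#[derive(Debug)]
pub struct SkillRequest {
    pub agent: String,
    pub kind: String, // the "type" of request
    pub priority: Priority,
    pub justification: String,
    pub status: Status,
}

/// A task handed to the system developer agent once a request is approved.
#[derive(Debug)]
pub struct Task {
    pub assignee: String,
    pub description: String,
}

const MIN_JUSTIFICATION_CHARS: usize = 20;

/// Self-filter: refuse requests that don't explain why the agent can't build it.
pub fn submit(agent: &str, kind: &str, priority: Priority, justification: &str) -> Result<SkillRequest, String> {
    if justification.trim().chars().count() < MIN_JUSTIFICATION_CHARS {
        return Err(format!("{}: explain why you can't build '{}' yourself", agent, kind));
    }
    Ok(SkillRequest {
        agent: agent.to_string(),
        kind: kind.to_string(),
        priority,
        justification: justification.trim().to_string(),
        status: Status::Pending,
    })
}

impl SkillRequest {
    /// Approve a pending request and route it to the system developer.
    pub fn approve(&mut self) -> Option<Task> {
        if self.status != Status::Pending {
            return None;
        }
        self.status = Status::Approved;
        Some(Task {
            assignee: "system-developer".to_string(),
            description: format!(
                "Build '{}' for {} ({:?} priority): {}",
                self.kind, self.agent, self.priority, self.justification
            ),
        })
    }

    /// Deny a pending request; already-decided requests are left alone.
    pub fn deny(&mut self) {
        if self.status == Status::Pending {
            self.status = Status::Denied;
        }
    }
}
```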

📈 Reputation & Trust System

Each agent has a computed reputation score (0-100) based on:

Grade history average (contributes up to 70 points)

Grade consistency (contributes up to 30 points)

Displayed as a color-coded progress bar in the dashboard
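The post gives the point split but not the formula, so here is one plausible way to compute it from the 1-10 grades produced by auto-grading. The mapping is an assumption: the mean grade scaled to 70 points, plus 30 points scaled down by the standard deviation of the grades.

```rust
/// Reputation score (0-100) from a history of 1-10 output grades.
/// The exact formula is an assumption: the post only says the average
/// contributes up to 70 points and consistency up to 30.
pub fn reputation(grades: &[f64]) -> f64 {
    if grades.is_empty() {
        return 0.0;
    }
    let n = grades.len() as f64;
    let mean = grades.iter().sum::<f64>() / n;
    let variance = grades.iter().map(|g| (g - mean).powi(2)).sum::<f64>() / n;
    let std_dev = variance.sqrt();

    // Average: a perfect 10 earns the full 70 points.
    let average_points = (mean / 10.0).clamp(0.0, 1.0) * 70.0;
    // Consistency: 4.5 is the largest possible standard deviation for grades
    // in [1, 10] (half 1s, half 10s), which earns 0 of the 30 points.
    let consistency_points = (1.0 - std_dev / 4.5).clamp(0.0, 1.0) * 30.0;

    (average_points + consistency_points).round()
}
```

Under this mapping, two agents with the same average differ only by consistency: grades of 8 and 8 score higher than grades of 6 and 10.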

📊 Dimensional Self-Assessment

Agents rate themselves across 5 dimensions each cycle:

Depth: How thoroughly the topic was explored

Relevance: How relevant to current priorities

Novelty: How much new information vs. repetition

Actionability: How useful and actionable the findings are

Quality: Overall writing and analysis quality

Displayed as horizontal bar charts in the web dashboard overview.

🏷️ Expertise Tags

Agents declare their areas of expertise via expertise_tags (e.g. ["threat-intelligence", "content-creator"]). Displayed as branded pill badges in the dashboard.

👍 Creator Reactions

React to any agent’s output with 👍 (useful) or 👎 (needs improvement) directly from the Output tab. Reactions are saved and fed back into the agent’s next cycle prompt — closing the feedback loop.

📝 Self-Modification (TACTICS.md)

Agents maintain a self-evolved playbook called TACTICS.md where they record:

Approaches that produced high-graded outputs

Patterns the creator responded positively to

What NOT to do (approaches that led to low grades)

Preferred output format and style

📈 Growth Narrative

Each agent gets an auto-computed growth summary showing: cycle count, average grade, trend direction (↑/↓/steady), and creator reaction counts. Displayed in the Overview tab.

⏰ Temporal Awareness

Agents know when they last ran and can see the time since their last run. They can request specific scheduling:

"15m", "30m", "1h", "2h", "4h": request to run again soon

"skip_next": ask to be skipped on the next cycle

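A scheduling hint only needs to accept the handful of values listed above. In this sketch, `ScheduleHint`, `parse_hint`, and `next_delay` are hypothetical, and treating "skip_next" as waiting one extra default interval is an assumption about what skipping means.

```rust
use std::time::Duration;

/// Scheduling hints an agent can leave in its state (hypothetical type).
#[derive(Debug, PartialEq)]
pub enum ScheduleHint {
    RunAgainIn(Duration),
    SkipNext,
}

/// Accept only the hint values listed above.
pub fn parse_hint(hint: &str) -> Option<ScheduleHint> {
    let minutes = match hint {
        "15m" => 15,
        "30m" => 30,
        "1h" => 60,
        "2h" => 120,
        "4h" => 240,
        "skip_next" => return Some(ScheduleHint::SkipNext),
        _ => return None,
    };
    Some(ScheduleHint::RunAgainIn(Duration::from_secs(minutes * 60)))
}

/// Delay until the next run. Treating "skip_next" as one extra default
/// interval is an assumption about how skipping works.
pub fn next_delay(default_interval: Duration, hint: Option<&ScheduleHint>) -> Duration {
    match hint {
        Some(ScheduleHint::RunAgainIn(d)) => *d,
        Some(ScheduleHint::SkipNext) => default_interval * 2,
        None => default_interval,
    }
}
```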
🛟 Failure Recovery

Prompt-level instructions ensure agents gracefully handle failures:

Unreachable data sources → use cached data and note it

Failed approaches → try alternatives instead of repeating

Can’t produce full output → produce status update instead of nothing

Blocked → set blocked_on in state.json with a clear explanation

⚡ Concurrent Evolution

Multiple agents can evolve simultaneously. The scheduler launches every agent whose timer has expired without waiting for one to finish before starting the next.
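Launching every due agent at once maps naturally onto scoped threads. This sketch assumes each agent has a `next_run` timestamp and that a cycle can be represented by a function returning a status string; `AgentTimer` and `run_due_agents` are illustrative names, not Avi's scheduler, and `std::thread::scope` needs Rust 1.63 or later.

```rust
use std::thread;
use std::time::Instant;

/// Hypothetical scheduler entry: when an agent is next due to run.
pub struct AgentTimer {
    pub name: String,
    pub next_run: Instant,
}

/// Start a cycle for every agent whose timer has expired, each on its own
/// thread, and wait for all of them. Results come back in launch order.
pub fn run_due_agents<F>(agents: &[AgentTimer], now: Instant, run_cycle: F) -> Vec<String>
where
    F: Fn(&str) -> String + Sync,
{
    let due: Vec<&AgentTimer> = agents.iter().filter(|a| a.next_run <= now).collect();
    let run_cycle = &run_cycle;
    // Scoped threads can borrow `due` and `run_cycle` without 'static bounds.
    thread::scope(|scope| {
        let handles: Vec<_> = due
            .iter()
            .map(|agent| scope.spawn(move || run_cycle(agent.name.as_str())))
            .collect();
        handles
            .into_iter()
            .map(|handle| handle.join().expect("agent cycle panicked"))
            .collect()
    })
}
```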

📝 Output Auto-Grading

After each cycle, the system automatically scores the agent’s output on a 1-10 scale using a lightweight LLM. Grades are tracked and displayed on the dashboard with trend sparklines.

📈 Adaptive Learning Loop

The system tracks output quality grades over time: if an agent’s grades are declining, it auto-injects corrective feedback. If improving, it reinforces the current approach. Agents self-correct without creator intervention.
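Detecting a declining or improving run of grades can be as simple as comparing two adjacent windows of recent grades. `grade_trend`, the window size, the threshold, and the feedback wording are all assumptions for illustration; the post doesn't specify how Avi decides a trend.

```rust
/// Direction of recent output grades (hypothetical type).
#[derive(Debug, PartialEq)]
pub enum Trend {
    Improving,
    Declining,
    Steady,
}

/// Compare the mean of the last `window` grades with the `window` before it.
/// Differences within `threshold` count as steady.
pub fn grade_trend(grades: &[f64], window: usize, threshold: f64) -> Trend {
    if window == 0 || grades.len() < 2 * window {
        return Trend::Steady; // not enough history yet
    }
    let mean = |slice: &[f64]| slice.iter().sum::<f64>() / slice.len() as f64;
    let n = grades.len();
    let recent = mean(&grades[n - window..]);
    let previous = mean(&grades[n - 2 * window..n - window]);
    if recent - previous > threshold {
        Trend::Improving
    } else if previous - recent > threshold {
        Trend::Declining
    } else {
        Trend::Steady
    }
}

/// Text to inject into the next cycle's prompt (wording is illustrative).
pub fn feedback_for(trend: &Trend) -> Option<&'static str> {
    match trend {
        Trend::Declining => Some("Your recent grades are declining. Revisit TACTICS.md and try a different approach."),
        Trend::Improving => Some("Your recent grades are improving. Keep using your current approach."),
        Trend::Steady => None,
    }
}
```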

🔔 Event-Based Triggers

Agents can respond to triggers such as receiving a message from another agent or another agent adding new information to the knowledge graph.

💰 Cost Tracking

Per-cycle costs are logged for each agent. Track spending over time and identify expensive cycles.
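Per-cycle cost logging can be sketched as a simple ledger. `CostLedger` is hypothetical, and the cost field carries whatever unit the model provider bills in.

```rust
/// One logged cycle cost. Units are whatever the model provider bills in;
/// this ledger is a hypothetical sketch.
#[derive(Debug, Clone)]
pub struct CycleCost {
    pub agent: String,
    pub cycle: u32,
    pub cost: f64,
}

#[derive(Default)]
pub struct CostLedger {
    entries: Vec<CycleCost>,
}

impl CostLedger {
    pub fn log(&mut self, agent: &str, cycle: u32, cost: f64) {
        self.entries.push(CycleCost { agent: agent.to_string(), cycle, cost });
    }

    /// Total spend for one agent across all logged cycles.
    pub fn total_for(&self, agent: &str) -> f64 {
        self.entries.iter().filter(|c| c.agent == agent).map(|c| c.cost).sum()
    }

    /// The single most expensive cycle for an agent, if any were logged.
    pub fn most_expensive(&self, agent: &str) -> Option<&CycleCost> {
        self.entries
            .iter()
            .filter(|c| c.agent == agent)
            .max_by(|a, b| a.cost.total_cmp(&b.cost))
    }
}
```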

🔍 Memory Search

Agents can search their own past journal, learnings, scratchpad, and outputs.
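A basic version of memory search is a case-insensitive keyword scan across an agent's files. This sketch assumes the journal, learnings, scratchpad, and outputs are already loaded as text; `MemoryDoc` and `search_memory` are hypothetical, and Avi may well use something smarter than substring matching.

```rust
/// A searchable memory source (journal, learnings, scratchpad, or output).
/// The shape is a hypothetical stand-in for Avi's files.
pub struct MemoryDoc {
    pub source: String,
    pub text: String,
}

/// Case-insensitive keyword search returning (source, matching line) pairs.
pub fn search_memory<'a>(docs: &'a [MemoryDoc], query: &str) -> Vec<(&'a str, &'a str)> {
    let needle_owned = query.to_lowercase();
    let needle = needle_owned.as_str(); // &str is Copy, so each closure gets its own copy
    docs.iter()
        .flat_map(move |doc| {
            doc.text
                .lines()
                .filter(move |line| line.to_lowercase().contains(needle))
                .map(move |line| (doc.source.as_str(), line))
        })
        .collect()
}
```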
